Since 2009, parts of China have participated in the OECD’s triennial PISA testing.

In 2009 and 2012, Shanghai topped the international rankings by quite some distance, with 15-year-olds in this Chinese city estimated to be up to two and a half years ahead of their counterparts in England.

Yet China’s participation in PISA also led to controversy.

Why was it allowed to enter just Shanghai into the study, rather than a random sample from the whole country, as other nations are required to do?

In England, London tends to outperform other parts of the country in terms of GCSE performance. Might London then rival Shanghai if it too were included as a separate entity in the PISA rankings?

Let’s look at the evidence.

London did not perform so well in PISA 2009 and 2012

In one of my papers published since the last PISA results [PDF], I tried to estimate how pupils in London performed in PISA. The results of this exercise can be found in the table below.

In fact, London did not fare too well. With an average maths score of 479, reading score of 483 and science score of 497, it was below both the OECD average and the scores for the rest of the UK. In maths, its pupils were three years behind 15-year-olds in Shanghai.

One obvious question to ask, then, is why there is no evidence of a “London effect” in PISA.

It’s hard to say for sure. But, by using the fact that PISA results have been linked to the National Pupil Database, I was able to find some clues. (Bear in mind that GCSEs and the PISA test are taken just six months apart.)

Most importantly, the analysis showed that pupils from disadvantaged and ethnic minority backgrounds in England tended to do much worse on the PISA test than their GCSE results would predict. For instance, black and Asian teenagers scored almost 30 PISA points lower than predicted, given their GCSEs.

Of course, as an ethnically and socially diverse city, London has a disproportionately large share of ethnic minority and disadvantaged pupils. Once this has been controlled for, the difference between London and the rest of the UK was vastly reduced.
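The idea of scoring “lower than predicted, given their GCSEs” can be illustrated with a minimal sketch. It assumes a simple one-predictor linear model of PISA score on a GCSE score, fitted across all pupils, and then averages each group's residuals (actual minus predicted PISA score). The function names, the scores and the group labels below are hypothetical and made up purely for illustration; this is not the model used in the paper, whose specification and controls are not described here.

```python
from statistics import mean


def fit_line(x, y):
    """Ordinary least squares fit of y = a + b * x with a single predictor."""
    mx, my = mean(x), mean(y)
    b = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y)) / sum(
        (xi - mx) ** 2 for xi in x
    )
    a = my - b * mx
    return a, b


def mean_residual_by_group(gcse, pisa, group):
    """Average of (actual PISA - PISA predicted from GCSE) within each group."""
    a, b = fit_line(gcse, pisa)
    residuals = {}
    for g_score, p_score, label in zip(gcse, pisa, group):
        residuals.setdefault(label, []).append(p_score - (a + b * g_score))
    return {label: mean(r) for label, r in residuals.items()}


# Hypothetical toy data: same GCSE scores in both groups, different PISA scores.
gcse = [40, 50, 60, 40, 50, 60]
pisa = [450, 500, 550, 420, 470, 520]
group = ["A", "A", "A", "B", "B", "B"]

gaps = mean_residual_by_group(gcse, pisa, group)
print(gaps)  # {'A': 15.0, 'B': -15.0}
```

In this toy data, group B sits 15 points below the fitted line on average and group A 15 points above it, a 30-point gap between pupils with identical GCSE scores. “Controlling for” background characteristics would typically mean adding them as extra predictors in the model, which this one-predictor sketch does not attempt.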

Regional variation in PISA 2015

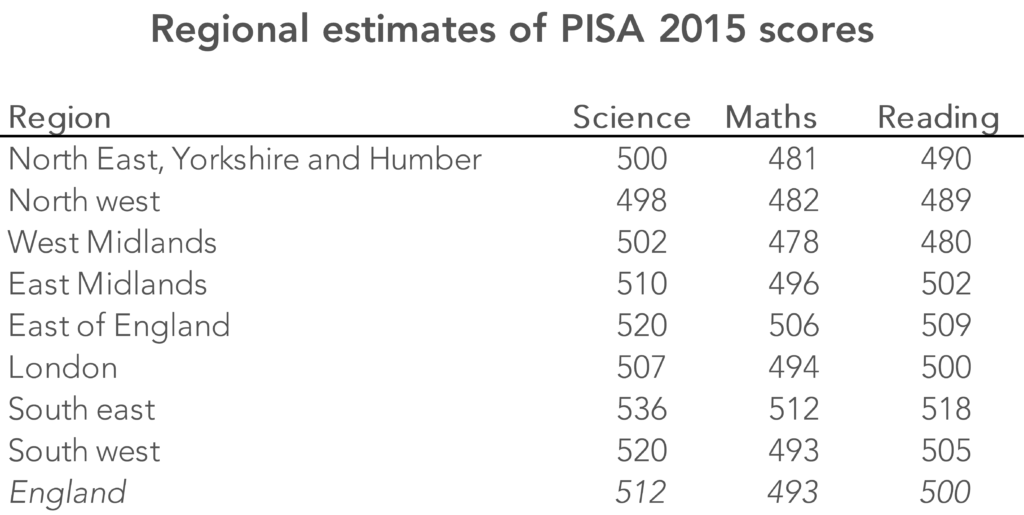

Regional variation in PISA scores in England was also something I considered in work on PISA 2015 [PDF]. The key results are in the table below.

Interestingly, London seemed roughly in line with the England average in the PISA 2015 results. Yet it was still some way behind the top-performing area (the south east), with a difference in science of around 30 PISA test points (in statistical terms, an effect size of 0.3). At the other end of the table were the north west, the north east and the West Midlands, which pulled down England’s average PISA score.
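The step from 30 points to an effect size of 0.3 can be written out as below. This assumes the usual PISA convention that each scale was originally set to have a standard deviation of about 100 points across OECD countries; the exact standard deviation varies a little from cycle to cycle, so the result is approximate.

```latex
d = \frac{\bar{x}_{\text{south east}} - \bar{x}_{\text{London}}}{\sigma}
  \approx \frac{30}{100} = 0.3
```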

Key message

So although London schools may outperform the rest of England in GCSE examinations, there is no evidence that the same is true in PISA.

More work needs to be done to understand why pupils from ethnic minority and disadvantaged backgrounds perform so differently across these two assessments.

Want to stay up-to-date with the latest research from FFT Education Datalab? Sign up to Datalab’s mailing list to get notifications about new blogposts, or to receive the team’s half-termly newsletter.

Interesting figures. I think the areas adjacent to London, that is the “South East” and “East” of England, perform considerably better than the rest of the country. The “South West” also performs pretty well, so one could say there is a general southern phenomenon.

I would speculate that there is a London effect that does show up in the PISA scores. It’s just that, for some reason, it shows up in regions further out from London’s core. This could be for a host of reasons…

– Perhaps London participants in PISA are concentrated in particular boroughs, perhaps the inner boroughs, rather than spread evenly across the 32 boroughs in proportion to their populations. It would be great to see a more granular breakdown of PISA scores, say per borough.

– It could be that when we describe the London effect in GCSEs, we’re measuring the percentage of pupils who pass a threshold (e.g. five A*-C GCSEs) rather than the average GCSE result, which would also capture those who fall short of the C-grade threshold, including those who fall very far short of it. I would posit that elsewhere in England, the weakest GCSE performers mostly get Ds and Es, while in London the range runs from the stellar straight-A* student destined for a career in, say, medicine, to the pupil who scores nothing or does not take GCSEs at all and is headed for a life of gang crime and violence.

Which is better: a population of consistently above-average educated people, or a below-average population in which, say, 10% are extremely well educated? The minimum level of intelligence required to do a job keeps rising as we automate away the less demanding jobs with computers and robots.