This post was updated at 8.30 AM on 22 November. An earlier draft of the piece had originally been posted in error.

What is the reasoning behind the government’s proposal for more selective schools: greater choice or better schools? Numerous studies, including from the Education Policy Institute [PDF] and ourselves [PDF], have demonstrated that both rationales rest on flawed foundations, yet large-scale data often fails to persuade aspirant parents that expanding selective schools is a bad idea. They see the headline exam results at grammar schools and assume that access to such a school for their child will mean similarly stellar outcomes. Ofsted reports don’t give us much more information – the high attainment of children on entry makes an outstanding judgement much more likely, but the headline results often mask different outcomes for different groups of children.

But what happens if we stop treating the 163 grammar schools as one homogeneous group and do some in-depth comparison of outcomes between them? Does their performance justify the elite label that many politicians have ascribed to them?

How can we compare schools?

Our starting point for comparing schools must be an acknowledgment that the type of pupils in a school greatly affects the headline exam results. Consequently, a simple comparison of secondary moderns or comprehensives with selective schools is a meaningless one. Grammar schools select on the basis of performance in a test, so it would be surprising if they didn’t perform better on overall attainment.

Instead, let’s hold constant some school factors that we know matter for results: the prior attainment of pupils at Key Stage 2 (KS2); the percentage of children eligible for free school meals (FSM) in each school; the percentage of children with English as an additional language; and the average IDACI scores of the pupils’ postcodes – a measure of neighbourhood deprivation.[1]

Using the chosen reference school (in this case, Tonbridge Grammar School), the Education Endowment Foundation Families of Schools database finds the school’s closest matches based on these factors. We weight the factors to give greatest importance to KS2 attainment and the percentage of pupils eligible for FSM.
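As a rough illustration of what "closest matches" with weighted factors can mean, here is a minimal sketch of a weighted nearest-neighbour search. It is not the EEF's actual method, which this post does not describe. The weights, the standardisation step, the function names and the four schools are all hypothetical, chosen only to give KS2 attainment and FSM percentage the most influence.

```python
from math import sqrt

# Hypothetical weights: the post says only that KS2 attainment and FSM
# percentage count most; these numbers are illustrative assumptions.
WEIGHTS = {"ks2": 3.0, "fsm_pct": 3.0, "eal_pct": 1.0, "idaci": 1.0}


def standardise(schools):
    """Rescale each factor to mean 0 and standard deviation 1 across schools."""
    scaled = {name: {} for name in schools}
    for factor in WEIGHTS:
        values = [s[factor] for s in schools.values()]
        mean = sum(values) / len(values)
        # Fall back to 1.0 if every school has the same value for a factor.
        sd = sqrt(sum((v - mean) ** 2 for v in values) / len(values)) or 1.0
        for name, s in schools.items():
            scaled[name][factor] = (s[factor] - mean) / sd
    return scaled


def closest_matches(schools, reference, n):
    """Return the n schools nearest to `reference` by weighted distance."""
    scaled = standardise(schools)
    ref = scaled[reference]

    def distance(name):
        return sqrt(sum(w * (scaled[name][f] - ref[f]) ** 2
                        for f, w in WEIGHTS.items()))

    others = [name for name in schools if name != reference]
    return sorted(others, key=distance)[:n]


# Made-up schools, for illustration only.
schools = {
    "Reference": {"ks2": 5.5, "fsm_pct": 2.0, "eal_pct": 5.0, "idaci": 0.08},
    "School A": {"ks2": 5.5, "fsm_pct": 2.5, "eal_pct": 6.0, "idaci": 0.09},
    "School B": {"ks2": 4.5, "fsm_pct": 20.0, "eal_pct": 15.0, "idaci": 0.30},
    "School C": {"ks2": 5.2, "fsm_pct": 4.0, "eal_pct": 10.0, "idaci": 0.12},
}
print(closest_matches(schools, "Reference", 2))  # ['School A', 'School C']
```

Standardising first stops a factor measured on a large scale (such as a percentage) from swamping one measured on a small scale (such as an IDACI score) before the weights are applied.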

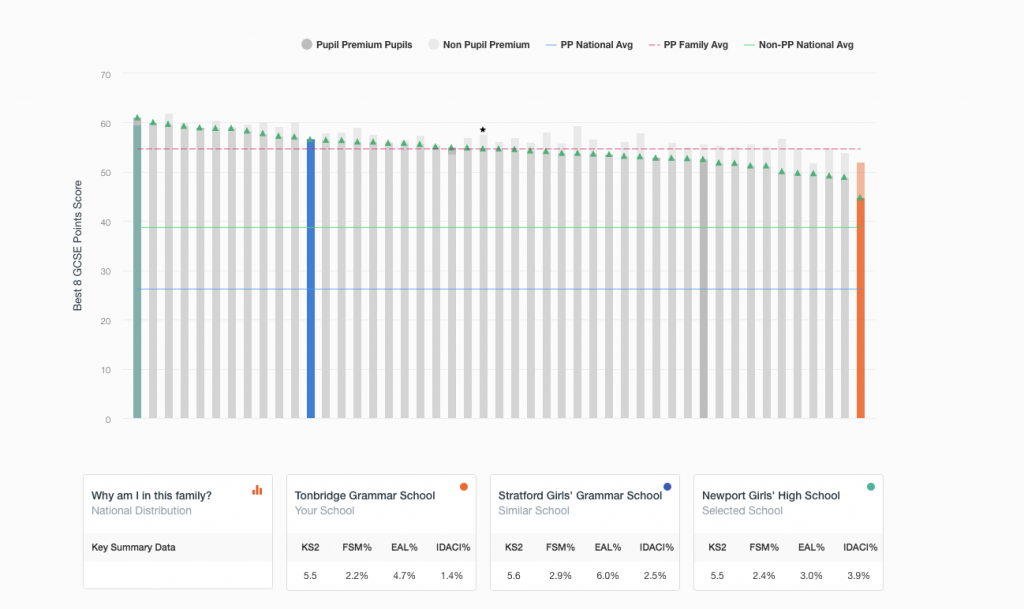

Even with these controls to make comparisons more meaningful, there is still considerable variation between the schools on key characteristics. The family in figure 1 consists entirely of grammar schools.[2] The average KS2 attainment ranges from just above 5.2 to 5.7. Tonbridge sits in the middle for its intake: an average of 5.5.

Are all grammar schools doing well for their pupils?

The number of Pupil Premium pupils is small in many grammar schools and, consequently, their results are more liable to fluctuate year-on-year, depending on the individual children in Year 11.

DfE performance tables rightly suppress data for pupil groups where numbers are small, but this means that a substantial number (around one third) of grammar schools have their Pupil Premium data suppressed.

The EEF Families of Schools analysis focuses mainly on data aggregated over three years. This means that data is suppressed for only five per cent of grammar schools and enables the performance of Pupil Premium pupils in grammar schools to be seen for a wider range of schools.

Tonbridge Grammar School is one example where Pupil Premium numbers are small and, for most single years, suppressed in performance tables.

Tonbridge Grammar (orange in the chart below) scores 52.1 on the Best 8 GCSE measure, averaged over three years: the equivalent of 6.5 points for each GCSE, or four B grades and four A grades. The family’s highest performer (in green) achieves 59.6 – equivalent to roughly four A grades and four A*s, or 7.5 points higher than Tonbridge’s results overall (roughly one whole grade higher for each of the eight GCSEs). For the Pupil Premium pupils the differences are even larger.
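The grade equivalences can be checked with a few lines of arithmetic. This sketch assumes a points scale of B = 6, A = 7 and A* = 8, which is inferred from the equivalences quoted here rather than stated in the post; `best8_total` is a hypothetical helper.

```python
# Assumed points scale, inferred from the post's own equivalences
# (four Bs and four As come to about 52 points): B = 6, A = 7, A* = 8.
POINTS = {"B": 6, "A": 7, "A*": 8}


def best8_total(grades):
    """Sum the points for a list of eight GCSE grades."""
    return sum(POINTS[g] for g in grades)


tonbridge, top = 52.1, 59.6  # three-year Best 8 averages quoted in the post

print(round(tonbridge / 8, 1))               # 6.5 points per GCSE
print(best8_total(["B"] * 4 + ["A"] * 4))    # 52, close to Tonbridge's 52.1
print(best8_total(["A"] * 4 + ["A*"] * 4))   # 60, close to the top score of 59.6
print(round(top - tonbridge, 1))             # 7.5, just under one grade on each of 8 GCSEs
```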

All of the schools perform significantly above the Pupil Premium national average – signified by the green triangles and the blue line. This is entirely expected given the prior attainment of pupils. However, the Pupil Premium family average – signified by the red line – enables a simple comparison within the family. It is mathematically impossible for all schools to be above the family average, but a more desirable distribution would have less variation either side of the line.

Best 8 GCSE outcomes for Tonbridge Grammar School’s family

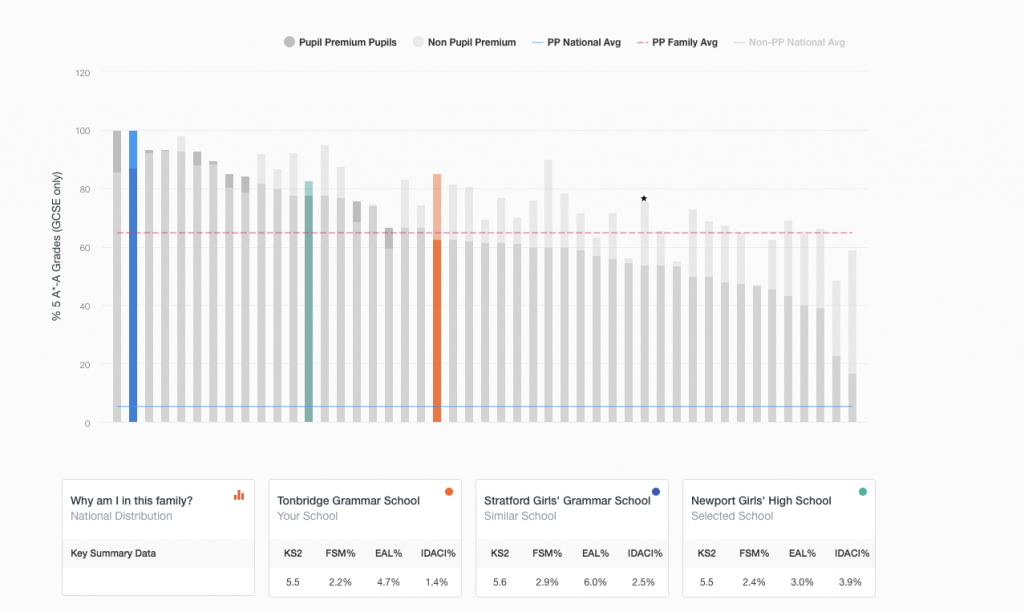

The story is even more pronounced for A* and A grades (seen in the chart below). Here, Tonbridge Grammar is in the middle of the group, but in some schools only 10% of Pupil Premium pupils achieve these grades, whereas in others 100% do.

Percentage of pupils achieving five A/A* grades

Do Pupil Premium pupils make more progress in grammar schools?

Secondary schools with a large percentage of pupils who score highly on KS2 exams have higher value added scores: it is one of the reasons why Progress 8 seems to favour schools with more advantaged intakes. However, if we group schools into bands based upon average KS2 scores, the picture is less clear-cut.

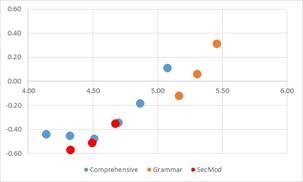

We group schools into eight bands starting at 4.0, with each band covering 0.2 of a KS2 level (i.e. band 1 is <4.2; band 8 is >=5.4). Unsurprisingly, we find that grammar schools cover bands 6, 7 and 8: the highest average intakes. Comprehensives are represented in band 1 to band 6[3] only. Secondary moderns (at least, those identified as such on EduBase) go from band 1 to band 4. However, the schools in band 1 have only 99 KS2 Level 5 Pupil Premium pupils over five years, so we have excluded them from the chart below.
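The banding rule can be written as a short function. This is a sketch of the rule as described, not the code used for the analysis. It assumes each band includes its lower boundary (consistent with band 8 being >=5.4) and that average KS2 levels are reported to two decimal places; the name `ks2_band` is hypothetical.

```python
def ks2_band(avg_ks2):
    """Return the band (1 to 8) for a school's average KS2 level.

    Band 1 is below 4.2, each later band spans 0.2 of a level,
    and band 8 is 5.4 or above.
    """
    # Work in whole hundredths of a level so that boundary values such as
    # 5.2 are not misplaced by floating-point rounding.
    hundredths = round(avg_ks2 * 100)
    band = (hundredths - 400) // 20 + 1
    # Clamp so that anything below 4.0 or above 5.6 still lands in band 1 or 8.
    return max(1, min(8, band))


print(ks2_band(4.5))  # 3: the national average sits in the 4.4 to 4.6 band
print(ks2_band(5.2))  # 7
print(ks2_band(5.5))  # 8: Tonbridge Grammar's average intake
```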

This chart shows how value added for Pupil Premium pupils who are at Level 5 at KS2 varies according to school group based on KS2 attainment. Each point represents at least 800 pupils, up to a maximum of 48,000 pupils.

Average KS2 level (x-axis) against value added score (y-axis) for Pupil Premium pupils

The graph shows that where the average KS2 level of a school is 4.5 – the national average – or higher, value added scores increase as the attainment of the intake increases.

However, at the lower end of grammar school KS2 intakes – where the average attainment level is less than 5.2 – comprehensives with similar intakes have higher value added scores. It is only at the fuzzy boundary of average 5.2 KS2 scores in band 6 that we can directly compare comprehensives and grammars, because only here are there comparable types of pupils.

The reality is, of course, that these high KS2 intake comprehensives are located in highly affluent neighbourhoods and beyond the catchments of most Pupil Premium pupils. Surely this is an argument for expanding the access of high-end schools to poorer pupils? Well, not if we heed the lesson from the other end of the graph. Here, outcomes for pupils at secondary moderns fall sharply away, even lower than at comparable comprehensive schools. The nature of selection’s zero-sum game is laid bare in this graph: for one child to benefit at a grammar, another child must lose in a secondary modern.

What about the attainment gaps within grammar schools?

The variation between schools is clear, but what about the variation within schools? The within-school gap persists in all types of school, although it is slightly smaller in grammar schools than in comprehensives. The numbers of Pupil Premium pupils in grammar schools are small – as low as one per cent in some schools – so these gaps do not involve very many pupils at all. However, their outcomes are still, on average, worse than those of their wealthier peers in the same school.

However, even here we are limited in the comparisons we can make. There is no straight comparison between FSM-eligible pupils in grammars and comprehensives. Using five years’ worth of data to build up a more accurate profile, we find that Pupil Premium pupils in grammar schools are less disadvantaged than those in comprehensives. On average, they have been FSM-eligible for 43 per cent of their time in school, compared with 59 per cent for those in comprehensives.

Furthermore, grammar schools have a relatively low proportion of Pupil Premium pupils who are long-term disadvantaged (defined as FSM-eligible for 80+ per cent of their school career) compared to comprehensives: 18 per cent vs. 35 per cent. This matters a great deal. As a previous FFT study has shown, the number of years a child is FSM-eligible is correlated with GCSE performance [PDF]. Poverty experienced over a long period leaves an indelible mark on a child’s school career. A lower trajectory of achievement is not inevitable for long-term disadvantaged pupils, but it will require exceptional schools to change it. Even if all grammar schools were capable of doing this (which the above analysis suggests they are not), it is clear that long-term disadvantaged pupils are not able to access them in anything like the numbers needed to overturn the attainment gap.
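The long-term disadvantage definition used in this section can be expressed as a simple threshold test. In this sketch the unit of measurement (school terms), the function names and the example pupils are assumptions for illustration; only the 80 per cent threshold comes from the text.

```python
LONG_TERM_THRESHOLD = 0.8  # "80+ per cent of their school career" in the text


def fsm_share(terms_eligible, terms_in_school):
    """Fraction of a pupil's school career spent FSM-eligible."""
    if terms_in_school <= 0:
        raise ValueError("terms_in_school must be positive")
    return terms_eligible / terms_in_school


def is_long_term_disadvantaged(terms_eligible, terms_in_school):
    """True if the pupil was FSM-eligible for at least 80% of their career."""
    return fsm_share(terms_eligible, terms_in_school) >= LONG_TERM_THRESHOLD


# Made-up pupils, each with 33 terms in school (11 years of 3 terms).
print(is_long_term_disadvantaged(14, 33))  # False: eligible about 42% of the time
print(is_long_term_disadvantaged(28, 33))  # True: eligible about 85% of the time
```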

What does this mean for policy?

If we are going to cherry pick a small group of schools, such as England’s 163 grammars, and hold them up as an example of what all schools could achieve, we could easily pick brilliant comprehensives such as this one or this one.

The lesson from Families of Schools is that all school types – grammar, comprehensive and secondary modern – have star performers and underperformers.

The expansion of selection won’t change that, but it will create a distraction from a more fundamental problem of school improvement across the spectrum. A more constructive policy would be to initiate the challenge and support required to reduce the variation between schools. Simply replicating the structure of how schools admit pupils is not a recipe for better attainment.

1. This is intended to be a simple comparison tool, not a fully controlled regression model.

2. This is because grammar schools have so few pupils eligible for FSM that this factor exerts a greater weighting on the creation of the families than it does for other types of school.

3. There were four comprehensive schools in band 7, all in Hertfordshire, so we have excluded these.

Hi,

What is the source for the “Average KS2-KS4 Progress across four selective LAs” graph in the Research Briefing? It would be interesting to see how this was worked out.

Hi Bob,

Thanks for your question. The figure comes from our analysis of the National Pupil Database, and is KS2-to-KS4 value added, based on pupils’ best eight GCSEs.

Kind regards,

Philip