The biggest criticism we hear about Progress 8 is that it is biased against schools with disadvantaged intakes.

This isn’t a problem unique to Progress 8. Any measure of attainment would be biased in the same way. Progress 8 is fairer than some because the impact of prior attainment is removed. But it stops short of being fair with regard to other factors known to have an effect on attainment, particularly disadvantage.

This only really becomes a problem if Progress 8 scores are used as measures of school effectiveness, which they are not, as we set out here. They don’t have to be interpreted this way. The original stated aim of school performance tables under the Citizen’s Charter was one of transparency.

There is a technical solution to the problem: contextual value added (CVA). But how would this work? And should we do it?

How do you define CVA?

CVA scores used to be published by the Department for Education until the Coalition government binned them, claiming that it “entrenche[d] low aspirations for children because of their background”. I’m not sure it did, but I will leave that for another day.

But it’s certainly true that CVA was hard to explain and that the scores were somewhat unstable – schools’ scores appeared to jump about from year to year.

The problem with CVA is that there is no “right answer”. CVA scores depend on the statistical model used. By statistical model, I mean which factors are included (prior attainment, gender etc.) and the method by which the factors are mashed together. If the model changes, the scores change.
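To make the model dependence concrete, here is a minimal sketch in Python using a tiny invented dataset (the schools, bands and scores are all made up for illustration). It scores schools the way Progress 8 does in outline, by comparing each pupil's result with the average for pupils who share the same characteristics. It matches first on prior attainment only, then on prior attainment plus disadvantage. This cell-means approach is a simplification. It is not the old DfE model, nor the models used later in this post.

```python
from collections import defaultdict

# Hypothetical pupils: (school, prior attainment band, disadvantaged?, outcome score)
PUPILS = [
    ("A", "low", True, 40), ("A", "low", True, 42),
    ("A", "high", True, 58), ("A", "high", False, 62),
    ("B", "low", False, 46), ("B", "low", False, 44),
    ("B", "high", False, 64), ("B", "high", True, 56),
]

def school_scores(pupils, key):
    """Mean of (outcome - benchmark) per school, where the benchmark is the
    average outcome of all pupils sharing the same key(pupil)."""
    groups = defaultdict(list)
    for p in pupils:
        groups[key(p)].append(p[3])
    benchmark = {k: sum(v) / len(v) for k, v in groups.items()}

    diffs = defaultdict(list)
    for p in pupils:
        diffs[p[0]].append(p[3] - benchmark[key(p)])
    return {s: sum(d) / len(d) for s, d in diffs.items()}

# Model 1: match on prior attainment only (value added).
va = school_scores(PUPILS, key=lambda p: p[1])
# Model 2: match on prior attainment and disadvantage (a crude CVA).
cva = school_scores(PUPILS, key=lambda p: (p[1], p[2]))

print(va)   # {'A': -1.0, 'B': 1.0}
print(cva)  # {'A': 0.0, 'B': 0.0}
```

Same pupils, same results, but school A moves from below average to average purely because the benchmark changed.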

There are some factors that we could probably all agree on: gender, month of birth, ethnic background, first language and disadvantage, particularly if the latter is based on pupils’ full school histories so that we can differentiate between those who are long-term disadvantaged and those who are only briefly disadvantaged.

But there are other factors which may not be so clear cut. Special educational needs status would be a good example because this can change over time and, in some cases, is within the school’s gift to decide. Under the old CVA measure that the government used to produce, there was a perverse incentive to over-identify pupils as having SEN because it lowered benchmark scores.

Then we have the question of whether to include school characteristics. For example, we know that schools’ Progress 8 scores are correlated with the mean Key Stage 2 of the cohort, and grammar schools are the main beneficiaries. Tom Perry from Birmingham University suggests this is a “phantom” effect caused by measurement error and proposes including the mean Key Stage 2 score of the school cohort in the calculation of value added scores as a way to fix it. So it would make sense to include it in a CVA measure.
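The mechanism behind a “phantom” effect of this kind can be illustrated with a small simulation. The sketch below is my own toy set-up, not Tom Perry's analysis: every number in it (200 schools, 100 pupils each, the spread of intakes and the amount of test noise) is an assumption chosen for illustration. Schools differ in intake but, by construction, have no effect at all on outcomes. The baseline test measures pupils' ability with random error.

```python
import random

random.seed(0)

N_SCHOOLS, N_PUPILS = 200, 100  # assumed sizes, for illustration only

def mean(xs):
    return sum(xs) / len(xs)

def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length lists."""
    mx, my = mean(xs), mean(ys)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sxx = sum((x - mx) ** 2 for x in xs)
    syy = sum((y - my) ** 2 for y in ys)
    return sxy / (sxx * syy) ** 0.5

# Simulate pupils school by school. Schools have NO effect on outcomes.
ks2, ks4 = [], []
for _ in range(N_SCHOOLS):
    intake = random.gauss(0, 0.5)                   # school's average true ability
    for _ in range(N_PUPILS):
        ability = intake + random.gauss(0, 1)
        ks2.append(ability + random.gauss(0, 0.6))  # baseline measured with error
        ks4.append(ability + random.gauss(0, 0.5))  # outcome, no school effect

# Pupil-level value added: residual from a simple regression of KS4 on KS2.
mx, my = mean(ks2), mean(ks4)
slope = (sum((x - mx) * (y - my) for x, y in zip(ks2, ks4))
         / sum((x - mx) ** 2 for x in ks2))
residual = [y - (my + slope * (x - mx)) for x, y in zip(ks2, ks4)]

# Aggregate to school level (pupils are stored in school order).
school_va = [mean(residual[s * N_PUPILS:(s + 1) * N_PUPILS]) for s in range(N_SCHOOLS)]
school_ks2 = [mean(ks2[s * N_PUPILS:(s + 1) * N_PUPILS]) for s in range(N_SCHOOLS)]

phantom_corr = pearson(school_ks2, school_va)
print(f"slope = {slope:.2f}, corr(school mean KS2, school VA) = {phantom_corr:.2f}")
```

In this toy set-up the noise in the baseline pulls the regression slope below 1, so pupils in high-intake schools are under-predicted on average. Their schools then appear to add value even though no school does.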

Other school-level measures, such as the percentage of disadvantaged pupils in the school cohort could also be included. Rather than comparing pupils in a school to similar pupils in the rest of England, we would now be comparing similar pupils in similar schools.

This is an important distinction. What if these school-level factors are correlated with the thing we’re trying to measure i.e. school effectiveness? What if, for instance, grammar schools were able to recruit more effective teachers? By adjusting for school characteristics we would be cancelling this out.

We also have the question of how factors are included. What is the nature of the relationship between each of the factors and the outcome? To get technical for a moment, do we use linear or multilevel regression? To what extent do we allow for interactions between factors (e.g. ethnicity, disadvantage and prior attainment) and so on?
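In symbols, and using notation that is mine rather than taken from any published specification, the choice looks something like this. Let $y_{ij}$ be the outcome of pupil $i$ in school $j$, $x_{ij}$ their prior attainment and $d_{ij}$ an indicator of disadvantage. A single-level linear regression with one interaction is

$$
y_{ij} = \beta_0 + \beta_1 x_{ij} + \beta_2 d_{ij} + \beta_3 x_{ij} d_{ij} + e_{ij}
$$

and a school's score is the mean of its pupils' residuals $\hat{e}_{ij}$. The multilevel version adds a school-level random effect:

$$
y_{ij} = \beta_0 + \beta_1 x_{ij} + \beta_2 d_{ij} + \beta_3 x_{ij} d_{ij} + u_j + e_{ij},
\qquad u_j \sim N(0, \sigma_u^2), \quad e_{ij} \sim N(0, \sigma_e^2)
$$

Here the school's score is the estimate of $u_j$, which is pulled towards zero for small schools. Dropping the $\beta_3$ term, or adding further interactions, gives yet another set of scores.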

The point here is that there are considerable researcher degrees of freedom. I can define my model one way, test its output and think it looks reasonable. Other researchers would say the same about their models.

Differences in approaches by different researchers lead to different school CVA scores, and these differences can sometimes be large given the range of school-level scores.

Some results

For the purposes of this post, I’ve defined my own CVA model.[1] It contains some of the same pupil-level factors as the old DfE model but there are some differences too.[2] For example, I’ve included SEN status at the end of Year 6 rather than end of Year 11, and I’ve included the percentage of a pupil’s school career spent in receipt of free school meals instead of whether they were eligible for free school meals in Year 11.

I’ve also defined a second model which includes some school-level factors.[3]

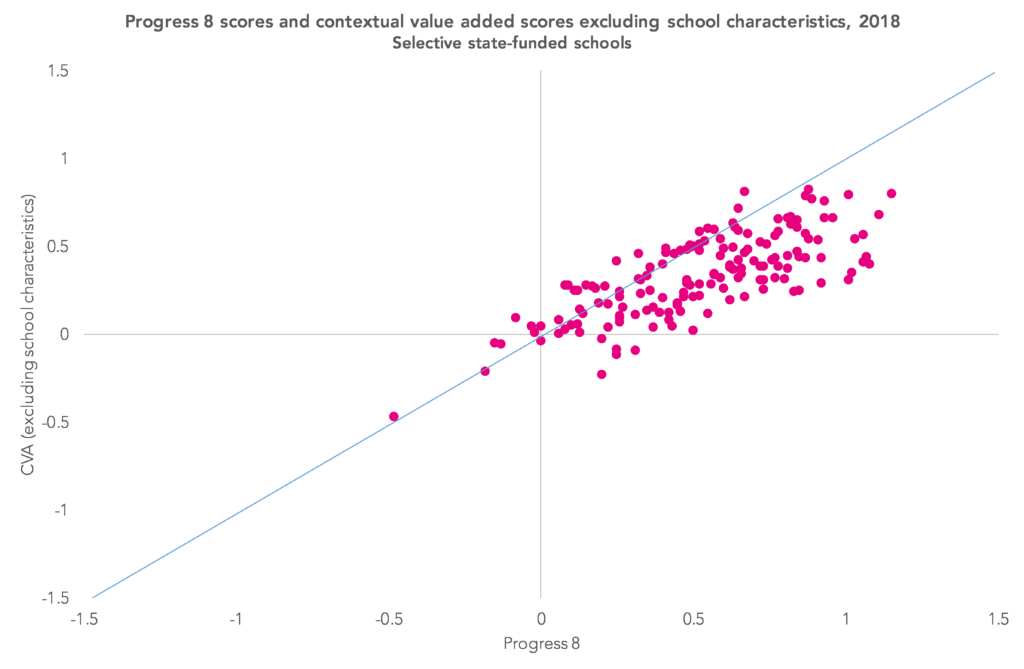

Let’s take a look at what happens when we calculate CVA leaving out the school-level factors. The chart below looks at grammar schools, which tend to do rather well under Progress 8. As you can see, the CVA scores tend to be lower, although for the most part remain positive.

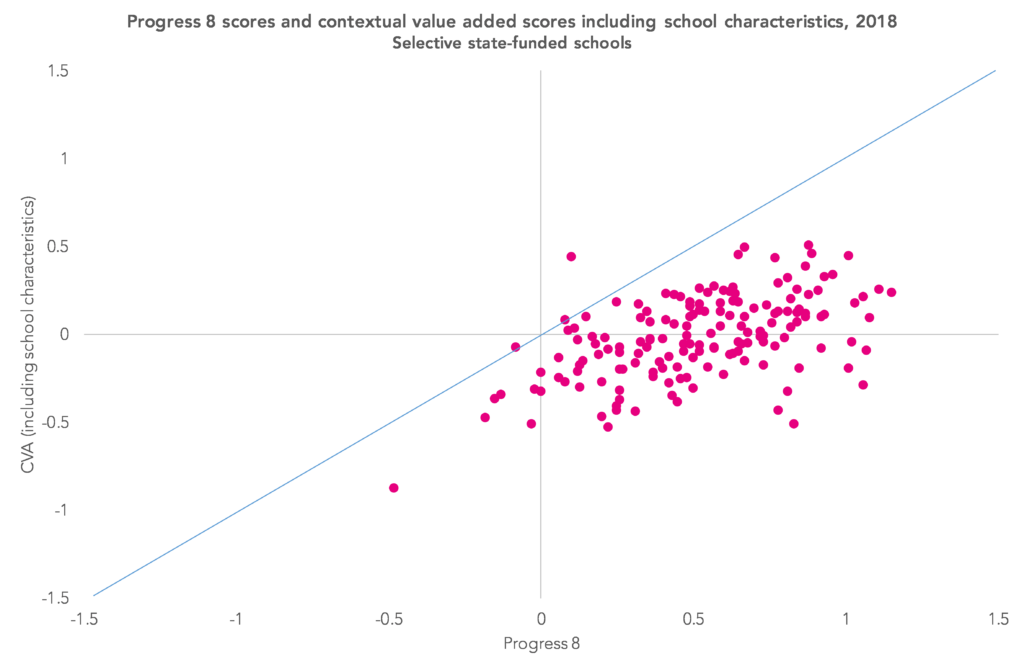

Now let’s add the school-level factors. This tends to reduce the scores even more. There is a broadly even split of schools with positive and negative scores. This is because we are pretty much comparing grammar schools to other grammar schools (or at least other schools with high attaining intakes).

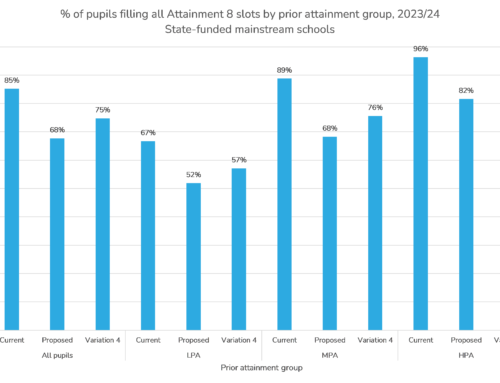

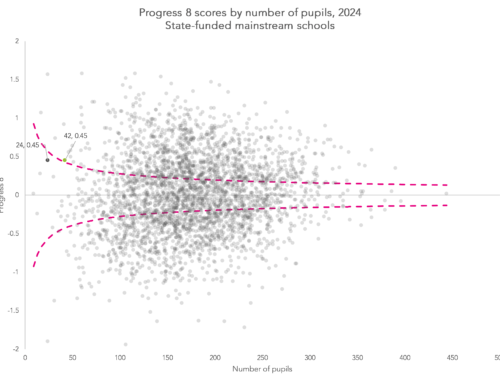

Finally, let’s look at the distribution of scores for all schools. The chart below plots the CVA score of every school, with those schools ordered from lowest to highest CVA scores. There are some outliers with extremely high or low scores (some of which are small schools with unreliable scores) but 90% of schools are between -0.5 and +0.5 (for P8 the comparable proportion of schools is 73%).

The difference between -0.5 and +0.5 is on the large side – it’s equivalent to a whole grade in each of the 10 slots of Attainment 8.

But between the 30th and 70th percentiles (between -0.15 and +0.15), the difference is not so great. It is equivalent to three grades in total across the 10 slots of Attainment 8. It’s at this point that we start to wonder whether we are seeing a genuine difference in attainment or just statistical noise.
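The conversion from a score gap to grades is simple arithmetic, sketched below. It assumes, as in Progress 8 in outline, that a pupil's score is their Attainment 8 point difference divided by the 10 slots (eight subjects, with English and maths double-weighted) and that one point is one grade. The function name is mine.

```python
ATTAINMENT_8_SLOTS = 10  # eight subjects, English and maths counted twice

def gap_in_grades(low_score, high_score, slots=ATTAINMENT_8_SLOTS):
    """Return (total grades across all slots, grades per slot) for a score gap."""
    per_slot = high_score - low_score
    return per_slot * slots, per_slot

wide_total, wide_per_slot = gap_in_grades(-0.5, 0.5)
mid_total, mid_per_slot = gap_in_grades(-0.15, 0.15)

print(round(wide_total, 2), round(wide_per_slot, 2))  # 10.0 1.0
print(round(mid_total, 2), round(mid_per_slot, 2))    # 3.0 0.3
```

So the gap between -0.5 and +0.5 is ten grades in total, or one per slot, while the gap between the 30th and 70th percentiles is three grades in total, or about a third of a grade per slot.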

Is it worth it?

CVA can often lead to philosophical arguments about whether it reinforces low expectations, and technical arguments about the model used.

Not only that but, as I set out here, CVA isn’t a measure of school effectiveness either. It’s useless for ranking schools in order of effectiveness from best to worst.

So why bother with it?

If we are going to compare schools, it’s the fairest way of doing it. Ultimately, it shows that attainment in most schools isn’t that different. Perhaps this is the real reason it was abandoned.

1. There are numerous methods for testing models. We could see which works best in terms of explaining variance and minimising bias with respect to the factors. Or we could split the data in two, derive models using the first split and work out which produces the best predictions for the second split. Different researchers would also have different ideas about which tests to use.

2. It includes mean Key Stage 2 fine grade (reading and maths) fitted as a quartic, gender, first language, ethnic background, percentage of school career eligible for free school meals, year of first registration at a state school in England, IDACI score of the area where a pupil lives, month of birth, SEN status in Year 6, whether the pupil was admitted at a non-standard time. It also includes some interactions.

3. Cohort mean Key Stage 2 score, cohort standard deviation Key Stage 2 score, % disadvantaged pupils, % pupils with a first language other than English.

This is a valuable contribution to a very difficult problem. Back in the 1990s the Cumbria LEA used a different approach to CVA by relating GCSE outcomes to mean Y7 school intake CATs scores. The rationale is the acceptance that GCSE outcomes are reliably predicted by CATs scores regardless of socio-economic and SEN factors (unlike SATs).

The LEA produced an annual chart like the one contained in this article

https://rogertitcombelearningmatters.wordpress.com/2018/11/21/pupil-premium-accountability/

Each school produces a data point on the chart. Schools above the regression line are getting better than the Cumbria average GCSE outcomes in relation to intake CATs scores and schools below the line are performing more poorly.

This approach seems to solve many of the problems that you identify. Comments please

Hello Roger. Thank you, appreciate it. We used to do similar in the local authority I worked in, collecting pupils’ CAT scores from schools and using them as a basis for value added calculations of KS4 attainment, as well as looking at pupils whose CAT scores were out-of-kilter with their KS2 scores (either positively or negatively). I’d be all in favour of doing something similar nationally, calculating value added based both on KS2 and on measures of cognitive function (be that CAT or something else). If both scores were in agreement then you would have a firmer basis for action. From what I remember, there were still differences with respect to disadvantage, i.e. for a given CAT score, disadvantaged pupils would still tend to achieve lower KS4 results, but I’d like to see more recent data.

(As an aside, I note that the correlation between CAT mean SAS and Attainment 8 was 0.73 according to this https://www.gl-education.com/media/315686/cat-and-national-test-indicators-2018-final.pdf. I assume this is based on 2017 data. That year the correlation between mean KS2 and Attainment 8 was 0.71. It would edge up a bit if we age-standardised the KS2 scores, so overall CAT and KS2 are similar in terms of predictive validity).

More generally, I think collecting measures of cognitive function at scale is necessary in order for the system as a whole to work out the most effective approaches to teaching pupils with different cognitive abilities. Becky Allen goes into this in more detail https://rebeccaallen.co.uk/2018/09/13/the-pupil-premium-is-not-working-part-iii/